Container platforms and Cloud Native are the talk of the town, with Kubernetes, or k8s as it is often abbreviated, recently being crowned the victor in the bloody but aptly named ‘Container Wars’. The white flag of surrender for management and orchestration was raised by Docker, which now supports Kubernetes natively. Docker is still the container engine of choice, though, which means that most Cloud Native systems are going to contain both platforms in a complementary fashion.

The first 3 blogs in the Open Networking Series saw me cover the basics of open networking, SDN, and then CORD from the ONF. These were firmly within the networking realm of the 3 pillars of infrastructure. With KubeCon and CloudNativeCon (proof that there is nothing Con can’t be added to) Europe approaching in May, the time is nigh to also have a look into storage and compute in the world of open source and open networking. Before jumping straight into it I will explain a little about the concept of Cloud Native and outline the basic differences between containers and virtual machines.

Cloud Native and Containers

The Cloud Native Computing Foundation (CNCF), which runs the cloud native project, states that “Cloud Native technologies empower organizations to build and run scalable applications, in modern dynamic environments such as public, private and hybrid clouds. Containers, service meshes, microservices, immutable infrastructure, and declarative APIs exemplify this approach.”

In layman’s terms, Cloud Native is a container-based environment whose aim is to develop and run apps, along with their services, all packaged within those containers. Organizations have been embracing this at a tremendous pace, as the conference circuit has shown, and with benefits including lower costs, shorter time to market and ease of management it is easy to see why.

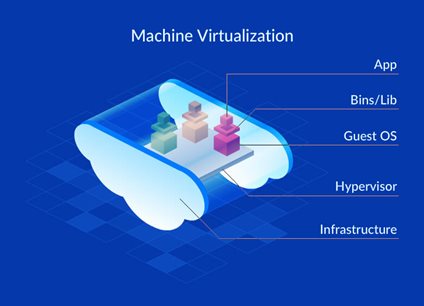

To explain containers, we need to first look at what a virtual machine is. Taking the image above as the example, we have the server as the infrastructure layer and above this we have a hypervisor which adds a layer of abstraction from that server hardware. Nothing above the hypervisor layer will see that the server is there. Above the hypervisor then we have our virtual machines. Each VM will have its own guest OS around the application. This has a touch of Yin and Yang to it in that it means each VM can use a different OS but also carries a lot of overhead.

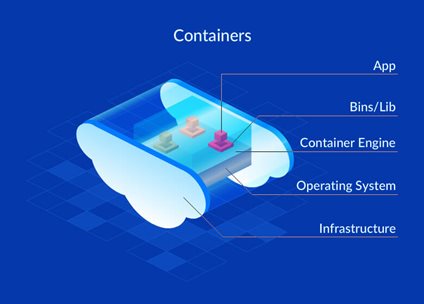

Again, taking the image above as our example, we have the server as the hardware infrastructure layer, but above this we now have the OS. One of the big differences between VMs and containers is that a container shares the host system’s kernel with all the other containers on that host. Above the OS layer we have the container engine, which we will say is Docker. This engine is what makes containers agile and portable, and it is the reason they can be created and moved around so quickly. The final layer is the container itself. Now that we have covered the basics, we can move on to explaining the role of Docker in the container-based environment.
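For anyone who wants to see the shared kernel for themselves, here is a tiny sketch. It only uses Python’s standard `platform` module; the function name `kernel_release` is my own and not part of any Docker tooling.

```python
import platform


def kernel_release():
    """Return the release string of the kernel this process is running on."""
    return platform.release()


if __name__ == "__main__":
    print("Kernel release:", kernel_release())
```

On a Linux host running Docker natively, I would expect this to print the same release on the host and inside a container, because the container has no kernel of its own. Inside a VM it reports the guest OS kernel instead. On desktop setups where Docker itself runs inside a small VM, the result will not match the host.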

Docker

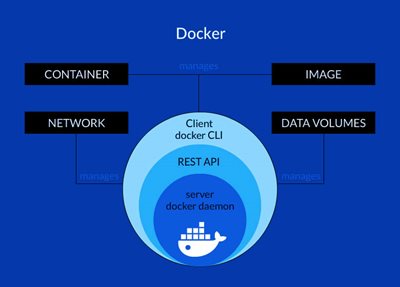

To avoid any confusion, I should state that we are looking at the Docker Engine only. Docker Inc. has many different technologies associated with it, but we will concentrate on the engine that supports the workflows involved in building, shipping and running the container-based environment.

Docker Engine is a client-server application that has three main components to it. They are:

The Server – This is a long-running background process called a daemon that answers requests from other programs. In Docker the daemon is started with the dockerd command.

The REST API – This specifies the interfaces that programs use to talk to the daemon and tell it what to do next.

The CLI – The command line interface. This is the client side of the equation and is invoked with the docker command.

The client-side CLI uses the REST API to control and interact with the daemon (server); this can be done through direct CLI commands or scripting. The daemon does most of the heavy work involved in building, running and distributing your Docker containers. Docker Swarm could be used as the management and orchestration system for the newly created Docker containers but, as I mentioned previously, we will look at Kubernetes for this particular task as it is far more extensive.
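To make the client, API and daemon split a little more concrete, here is a minimal sketch of a script playing the role of the client. It assumes the daemon is listening on the Unix socket `/var/run/docker.sock`, which is the usual default on Linux but may differ on your system. The class `UnixHTTPConnection` and the helper `docker_get` are names I have made up for illustration; they are not part of Docker.

```python
import http.client
import json
import socket

# Assumption: the usual default socket path on Linux installs.
DOCKER_SOCKET = "/var/run/docker.sock"


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that runs over a Unix domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=5):
        # The host name is only used for the Host header.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def docker_get(path, socket_path=DOCKER_SOCKET):
    """Send a GET request to the daemon and return (status, decoded JSON)."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return response.status, json.loads(response.read())
    finally:
        conn.close()
```

With a daemon running and permission to read the socket, `docker_get("/containers/json")` should return the same list of running containers that `docker ps` shows, since the CLI is making a similar request on your behalf. If the socket is missing, the call raises an `OSError`.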

Kubernetes

Now that we have created our Docker containers, we want to be able to run multiples of these across multiple machines. We need to figure out how these containers interact with each other, handle the storage requirements, distribute loads between containers and even add or remove new containers as demands change. Step up to the plate Kubernetes!

Kubernetes (from the Greek for helmsman or pilot) is the open source container orchestrator of choice. It was created by Google in 2014 but is now run by the CNCF. In essence, it is a system for running and coordinating a container-based environment across multiple machines, providing scalability, high availability and, importantly, predictability. So how does it work, I hear you ask?
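Before looking at the pieces, the core idea of adding or removing containers as demand changes can be pictured as a loop that compares what you want with what is running. The sketch below is a toy of my own, not Kubernetes code: the function `reconcile` and the `web-N` container names are invented for illustration.

```python
def reconcile(desired_replicas, running):
    """Return the actions needed to bring `running` to the desired count.

    `running` is a list of container names. The result is a list of
    ("start", name) or ("stop", name) tuples.
    """
    actions = []
    if len(running) < desired_replicas:
        # Too few containers: start new ones using the next unused numbers.
        existing = set(running)
        index = 0
        while len(running) + len(actions) < desired_replicas:
            name = f"web-{index}"
            if name not in existing:
                actions.append(("start", name))
            index += 1
    else:
        # Too many (or exactly enough): stop the surplus from the end of the list.
        for name in reversed(running[desired_replicas:]):
            actions.append(("stop", name))
    return actions


if __name__ == "__main__":
    print(reconcile(3, ["web-0"]))
    print(reconcile(1, ["web-0", "web-1", "web-2"]))
```

You state the number you want and the loop works out the steps, rather than you issuing the start and stop commands by hand. That is the spirit of the declarative APIs mentioned in the CNCF definition.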

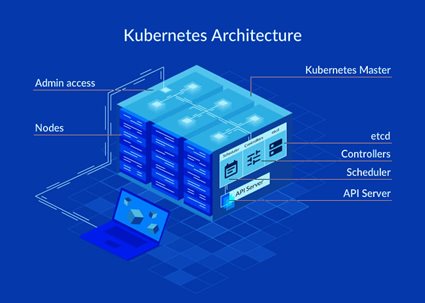

The highest level of abstraction is the Kubernetes cluster. This is a group of servers running Kubernetes. Each cluster requires a server that can command and control within the cluster, and this is called the master. Just like in Highlander, there can be only one, at least in a basic setup; production clusters often run several masters for high availability. The master acts as the brain of the cluster, deciding how to split up resources, performing health checks, scheduling and, importantly, orchestrating communication. The remaining servers are called nodes and, because of the master, they are free to concentrate on accepting and running workloads, creating or destroying containers and so on.
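To give a feel for the scheduling part of the master’s job, here is a deliberately simplified sketch. It assumes CPU is the only resource that matters and always picks the node with the most free capacity. The real Kubernetes scheduler weighs far more than this, and the function `schedule` plus the node and workload names are purely illustrative.

```python
def schedule(workloads, nodes):
    """Assign each workload to the node with the most free CPU.

    workloads: dict of workload name -> CPU cores requested
    nodes: dict of node name -> CPU cores available
    Returns a dict of workload name -> node name, or None if nothing fits.
    """
    free = dict(nodes)  # copy so the caller's data is not modified
    placement = {}
    for name, cpu in workloads.items():
        # Filter: keep only the nodes with enough free CPU for this workload.
        candidates = [n for n, avail in free.items() if avail >= cpu]
        if not candidates:
            placement[name] = None  # unschedulable in this toy model
            continue
        # Score: most free CPU wins; ties go to the alphabetically first node.
        best = min(candidates, key=lambda n: (-free[n], n))
        free[best] -= cpu
        placement[name] = best
    return placement


if __name__ == "__main__":
    nodes = {"node-a": 4, "node-b": 2}
    workloads = {"api": 2, "db": 2, "cache": 1, "batch": 3}
    print(schedule(workloads, nodes))
```

The nodes never have to make these decisions themselves. They simply run whatever the master hands them, which is the division of labour described above.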

If we wanted to dig a little deeper, within the nodes we have pods. They are the most basic of the Kubernetes objects that can be created and consist of one or more containers, but for a beginner’s guide I think we should leave it there.
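For the curious, a pod with more than one container can be described in just a few fields. The sketch below builds a minimal Pod manifest as a Python dictionary and prints it as JSON. The helper `pod_manifest`, the pod name and the two images are placeholders I picked for illustration, and a real pod would normally carry more settings than this.

```python
import json


def pod_manifest(name, containers):
    """Build a minimal Pod manifest.

    containers: list of (container_name, image) tuples.
    """
    if not containers:
        raise ValueError("a pod needs at least one container")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [{"name": c, "image": i} for c, i in containers]
        },
    }


if __name__ == "__main__":
    # Placeholder names and images, chosen only to show two containers in one pod.
    manifest = pod_manifest("web", [("nginx", "nginx:1.25"), ("sidecar", "busybox:1.36")])
    print(json.dumps(manifest, indent=2))
```

The printed JSON has the general shape that Kubernetes expects for a Pod object. The two entries under `containers` are what “one or more containers” looks like in practice.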

Final Thoughts

To describe something as complex as a container-based environment is not an easy task in 1000 words, but we will dedicate another blog to it in the coming months, especially to how the environment works within the bare metal, Whitebox world of Open Networking and SDN. I will also cover SnapRoute’s newly released Cloud Native Network Operating System (CN-NOS), which leverages this containerized, microservice architecture with embedded Kubernetes. This NOS may prove to be a game changer when it comes to transforming brittle and static networks into agile and dynamic ones, as we have seen in the container world.

As always, I would be more than happy to share additional resources with you. For more technical information on products or SDN, give me a shout. You can also browse our Open Networking products here.

Slán go fóill,

Barry

GLOSSARY OF TERMS

- IoT – Internet of Things

- 5G – 5th generation of cellular mobile communication

- Linux – Family of free open-source operating systems

- ONF – Open Networking Foundation

- OCP – Open Compute Project

- SDN – Software Defined Networking

- Edgecore – White box ODM

- Quanta – White box OEM

- Data Plane – Deals with packet forwarding

- Control Plane – Management interface for network configuration

- ODM – Original design manufacturer

- OEM – Original equipment manufacturer

- Cumulus Linux – Open network operating system

- Pluribus – White box OS that offers a controllerless SDN fabric

- Pica8 – Open standards-based operating system

- Big Switch Networks – Cloud and data centre networking company

- IP Infusion – Whitebox network operating system

- OS – Operating system

- White Box – Bare metal device that runs off merchant silicon

- ASIC – Application-specific integrated circuit

- CAPEX – Capital expenditure

- OPEX – Operating expenditure

- MAC – Media Access Control

- Virtualization – To create a virtual version of something including hardware

- Load Balancing – Efficient distribution of incoming network traffic to backend servers

- Vendor Neutral – Standardized, non-proprietary approach along with unbiased business practices

- CORD – Central Office Rearchitected as a Data Center

- SD-WAN – Software Defined Wide Area Network

- NFV – Network Function Virtualization

- RTBrick – Web scale network OS

- SnapRoute – Cloud native network OS

- MPLS – Multiprotocol label switching

- DoS – Denial of service attack

- ONOS – ONF controller platform

- Linux Foundation – Non-profit organization that hosts Linux and other open source projects

- MEC – Multi-access edge computing

- Distributed Cloud

- COMAC – Converged Multi-Access and Core

- SEBA – SDN enabled broadband access

- TRELLIS – Spine and leaf switching fabric for central office

- VOLTHA – Virtual OLT hardware abstraction

- R-CORD – Residential CORD

- M-CORD – Mobile CORD

- E-CORD – Enterprise CORD

- PON – Passive optical network

- G.FAST – DSL protocol for local loops shorter than 500 metres

- DOCSIS – Data over cable service interface specification

- BGP – Border gateway protocol routing protocol

- OSPF – Open shortest path first routing protocol

- DSL – Digital subscriber line

- Container – Isolated execution environment on a Linux host

- Kubernetes – Open source container orchestration system

- Docker – Program that performs operating-system-level virtualization

- Cloud Native – Term used to describe container-based environments

- CNCF – Cloud Native Computing Foundation

- API – Application Programming Interface

- REST API – Representational State Transfer Application Programming Interface

- CLI – Command Line Interface

- VM – Virtual machine