Who's heard of the Open Compute Project? (Show of hands). A few of you. That's quite alright. You're going to learn about OCP today and what it's doing to transform the data center industry.

The Open Compute Project is a non-profit organization that was founded by Facebook in 2011, and its mission is to release product specifications that the hyperscalers (such as Facebook) were using. They decided to openly share the design of their data center hardware with the market as open specifications for anybody to use.

Since 2011 lots of people have started using Open Source, and there is a large Open Source community made up of our various partners, manufacturers and end users – in fact Edgecore Networks and Penguin Computing, who are here today, are key partners in the Open Compute Project. Anybody can come in and play: you can contribute designs for servers or switches, or work with Edgecore or Penguin on design. It’s a very open community.

It's a little bit like the Linux Foundation which started in 2010 except we're more about the hardware than the software, but you'll find that the Linux Foundation and the Open Compute Project are coming together very intimately in the telco market at the moment.

I've been designing data centers for 25 years, but I had my eureka moment when I went to Facebook’s data center in Luleå, Sweden, on the edge of the Arctic Circle. I was blown away; I just couldn't believe what they were doing. What I'm going to do is show you some of the philosophies that they developed. All these bright young people who came out of university with their PhDs in electronics and computing weren’t biased by what went before them. They just reinvented it all.

It's really quite staggering and it really is disrupting the industry. There are large organizations who are trying to ignore the shift towards Open Source, as it will accelerate the decline of their business. Hopefully I’ll give you some of the arguments why Open Source is the way forward.

What I’m going to do today is to try and discredit what we did in the computer rooms (aka data centers) of the 20th century to show you the efficiencies and cost savings that OCP data centers introduce. Here are the assumptions I will reset:

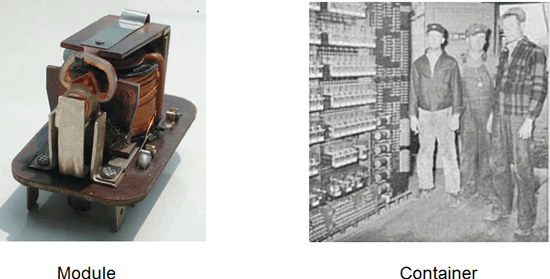

Why do we use 19-inch racks?

Well, we use 19” racks because they were originally ‘relay racks’: we used to put telegraph and railway electromechanical relays in them, and those measured 19”. I don't know a single data center that puts relays in racks today.

Figure 1. Relay Racks

A module today is something like a hard disc drive, which is 4 inches wide. If you try to put them side by side in a 19” rack, you only fit 4. However, if you make the rails in the rack a little bit wider, just the rails, you fit 5 in. Consequently you increase the capacity of the rack, and you get 25% more hard drives.
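The arithmetic behind the 25% figure can be sketched as follows. The 4-inch module width is the speaker's figure; the usable widths are assumptions for illustration only (roughly 17.75 inches of clear opening between the rails of a 19-inch rack, and roughly 21 inches in a wider OCP-style rack).

```python
import math

MODULE_WIDTH_IN = 4.0  # hard drive width quoted in the talk

# Assumed usable widths between the rails (illustrative, not from the talk).
USABLE_WIDTH_IN = {
    "19-inch rack": 17.75,
    "wider OCP rack": 21.0,
}

def modules_side_by_side(usable_width_in: float,
                         module_width_in: float = MODULE_WIDTH_IN) -> int:
    """Number of whole modules that fit across the usable width."""
    return math.floor(usable_width_in / module_width_in)

fits = {name: modules_side_by_side(width)
        for name, width in USABLE_WIDTH_IN.items()}

# Relative gain in drives per row when moving to the wider rack.
gain = fits["wider OCP rack"] / fits["19-inch rack"] - 1

for name, count in fits.items():
    print(f"{name}: {count} modules per row")
print(f"Extra drives per row: {gain:.0%}")
```

Under these assumed widths, the narrow rack wastes almost half a module of space per row, while the wider one fits a fifth module with a little room to spare.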

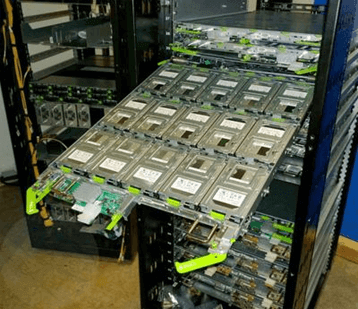

Figure 2. OCP Rack (wider) with 5 Adjacent Modules

So why do we do it? We shouldn’t just keep trying to bend metal to go into a 19-inch rack when you can just make the rails a little wider and optimize it for the products or the modules that you put into it.

An egg box – now this is good design. You’ve got the egg and you design the box to accommodate it. Why have we been doing it the other way around for so long? To me it’s stupid. You'll find most OCP racks have a wider pitch, and David Ingersoll from Penguin Computing will emphasize this in his presentation later.


Why are cabling interfaces on the back of servers?

It’s because the server is a PC that used to stand on a desk with cables going out the back and we picked it up and put it on a shelf in a rack. That's why the cables are on the back. And we just carried on like that in the 20th century. Between 2008 and 2011 all the big hyperscalers said “why are we doing this?”, and “why have we got this narrow rack?”, and finally… “I can make it a little bit wider, and why not put the interfaces on the front where they’re easier to access?”.

Now this is what the OCP Community has done, and they’ve done much more than that. They have eliminated all of the unnecessary items in the server. There's no power supply in an OCP server; there’s no AC input into the server. There are no management ports, because we don’t need them anymore. When did you last plug a keyboard, a video monitor or a mouse into a server inside a computer room? You don't do it, yet we've still got all these ports on traditional servers. The graphics card elements on a server consume power and cost money, so just take them out because you never use them. The supplementary Ethernet ports have all gone too, and we used to have four at the back of the server! This is the OCP approach: it is about engineering, specifically value engineering.

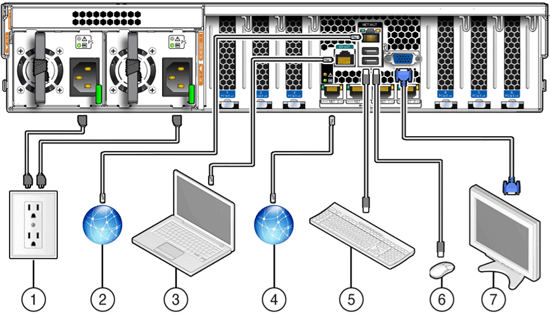

Figure 3. Traditional Server with Redundant Ports

The only thing that really exists on the server is the LAN port which goes on the front of the server now, and it comes out and goes up onto the top of rack switch which is probably going to be an Accton/Edgecore type switch - Open Source hardware.

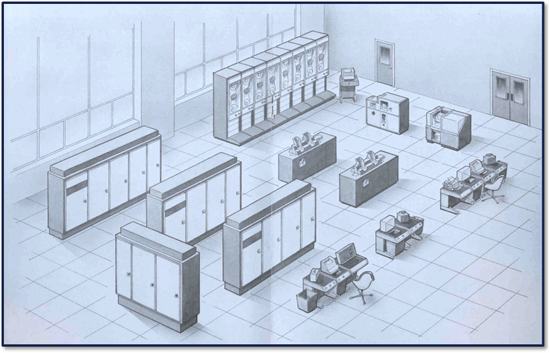

Why do we use access floors in computer rooms?

Here is an example of an IBM mainframe room, and the mainframes were water cooled. They used the access floors to run the cables and the water pipes under the floor, because if they ran the pipes over the top and they leaked, the water would destroy the IT equipment.

Figure 4. IBM Mainframe Room Illustration

In OCP data centers you don’t use access floors because they’re an inefficient way of supplying cooling into a data center. Air buoyancy means that it’s better practice to pour cool, denser air down into a warmer room than to blow it up from the bottom.
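The buoyancy point rests on cooler air being denser than warmer air. A minimal sketch using the ideal gas law for dry air at sea-level pressure shows the size of the effect; the two temperatures (20 °C supply air, 35 °C room air) are illustrative assumptions, not figures from the talk.

```python
R_DRY_AIR = 287.05      # specific gas constant for dry air, J/(kg*K)
PRESSURE_PA = 101_325   # standard sea-level pressure, Pa

def air_density(temp_c: float, pressure_pa: float = PRESSURE_PA) -> float:
    """Dry-air density in kg/m^3 from the ideal gas law: rho = p / (R * T)."""
    return pressure_pa / (R_DRY_AIR * (temp_c + 273.15))

cool = air_density(20.0)  # assumed supply-air temperature
warm = air_density(35.0)  # assumed room-air temperature

print(f"cool air: {cool:.3f} kg/m^3")
print(f"warm air: {warm:.3f} kg/m^3")
print(f"cool air is {cool / warm - 1:.1%} denser, so it sinks")
```

The density difference is only a few percent, but it is enough that cool air delivered from above falls through the room on its own, whereas air pushed up from under a floor has to be driven against that tendency.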

Why do we have tight control on relative humidity and temperature?

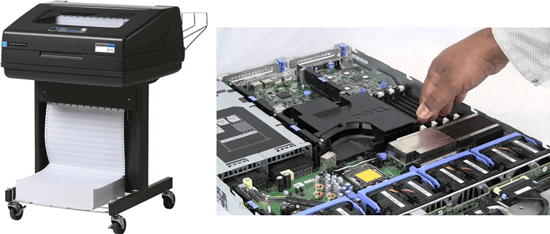

Because in old computer rooms we had paper and printers, and if you expose paper to too much humidity it curls and jams the printer. That’s the reason. The other reason is that in traditional servers the airflow is so constrained that you need to maintain a very low temperature at the front intake. Take the most modern OCP servers today: I think with Penguin Computing’s servers you can go up to 30 degrees Celsius on the intake.

Figure 5. Obsolete Printing Paper Required Low Humidity & Constrained Airflows Require Low Temperature

Now 30 to 40 degrees Celsius on the intake means you no longer need any refrigeration or cooling plant in the data center. You can just run the servers on ambient air.

Why do we have centralized UPS and chillers in Data Centers?

Well, with an OCP data center you take them all out. Every time Microsoft builds a data center today, they save $33 million because they take this stuff out. You reduce the facility cost and footprint by eliminating the centralized UPS and migrating to distributed UPS within the IT load, which gives you a smaller failure domain and improved uptime. It's a whole different way of doing things.

Why rapid break-fix and hardware redundancy?

Why do we work so quickly to fix things? In the traditional data center there are screws, nuts, bolts, washers and tools; it's all very, very complex. There's an almost infinite number of rack configurations, and every rack is different. The racks keep getting more complex because more and more hardware is added for redundancy. Resilience comes from hardware redundancy.

If you look at an OCP data center, there are no tools. A condition of being an OCP-approved device is that it has green touch points. You press the green touch points, and the disk comes out. It is a tool-less design; you don't even need an instruction manual to repair it.

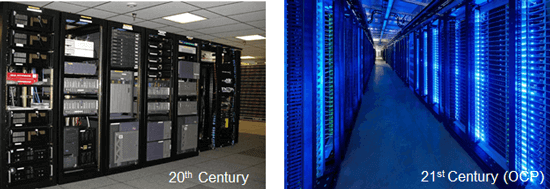

In the whole of Facebook's estate, they’ve got hundreds of thousands of racks and millions of servers, but there are only six rack configurations. It's just so simple. If you look at the ratio of engineers to physical servers in a typical enterprise data center, it might be one full-time technician to up to 200 servers. Facebook achieves a ratio of one technician to 25,000 physical servers. That's more than one hundred times fewer people, so it's not just the technology cost reduction; there’s a massive impact on staffing costs too.
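The staffing comparison works out as follows. The two servers-per-technician ratios are the speaker's figures; the fleet size of one million servers is an illustrative assumption.

```python
import math

SERVERS_PER_TECH_ENTERPRISE = 200    # upper figure quoted for a typical enterprise
SERVERS_PER_TECH_FACEBOOK = 25_000   # figure quoted for Facebook

def technicians_needed(servers: int, servers_per_tech: int) -> int:
    """Full-time technicians required, rounded up to whole people."""
    return math.ceil(servers / servers_per_tech)

# How many times more servers each technician covers.
improvement = SERVERS_PER_TECH_FACEBOOK / SERVERS_PER_TECH_ENTERPRISE

fleet = 1_000_000  # assumed fleet size, for illustration only
enterprise_staff = technicians_needed(fleet, SERVERS_PER_TECH_ENTERPRISE)
ocp_staff = technicians_needed(fleet, SERVERS_PER_TECH_FACEBOOK)

print(f"Each technician covers {improvement:.0f}x more servers")
print(f"{fleet:,} servers: {enterprise_staff:,} technicians vs {ocp_staff:,}")
```

Even against the best-case enterprise ratio of 200 servers per technician, the quoted Facebook ratio is a factor of 125, so "one hundred times" is a conservative rounding.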

Figure 6. 20th Century Data Center versus OCP Data Center

In OCP data centers resilience is based on a combination of hardware and software. Some of the big hyperscale data centers run a piece of software called Chaos Monkey, which goes around trying to shut the data center down, and everything is automated so they can compensate. When there is a failure in one of those data centers, they worry less about it because they have a resilient network based on software and hardware. The Open Source Ethernet Clos fabrics remove the urgent need for break-fix maintenance.

In Summary

The move to Open Compute is happening now. The Telco industry has been through some major disturbances recently, and in 2016 the world's main Telcos decided they're going to change all their telephone exchanges into OCP data centers, with the help of the Linux Foundation on a project called CORD and the OCP Foundation with TIP (Telecom Infra Project).

OCP is resetting people’s 20th century mind-sets so they can move to the 21st century’s Open Source data centers – moving away from Black Box proprietary hardware solutions that lock in the user and benefit the few at the expense of the majority.

About EPS Global

EPS Global is an OCP Community Member, and we bring Software Defined Networking (SDN) hardware and rack integration solutions to the market through our OCP-approved suppliers. We have the warehousing facilities and logistics experience to provide stock availability in our 3 distribution hubs worldwide, enabling single shipments and corporate branded packaging where required, minimizing lead times and eliminating the hassle of sourcing from multiple vendors. We have local language and currency support in each of our 28 global locations, ensuring you always have access to friendly customer support to deliver your OCP-approved hardware solutions regardless of your location.


John Laban’s slides, along with those of other speakers from Datacenter Transformation 2016, are available here.