The benefits of Artificial Intelligence and Machine Learning have been widely discussed by theologians in every drinking establishment around the world since ChatGPT burst onto the scene in late 2022. Whether you think it’s a divine gift from God, or the spawn of Satan, it’s here to stay and will increasingly impact on our lives.

This blog is not about the advantages or disadvantages of AI or Machine Learning, but the networking technology behind these shiny platforms. These platforms reduce human error, work 24/7, assist in medical diagnoses, and write us delicious recipes like this pie below!

(This was after inputting 30,000 cookbook recipes and asking it to create its own)

“Beothurtreed Tuna Pie.” Want to make it? You’ll need the following ingredients:

- “1 hard cooked apple mayonnaise”

- “5 cup lumps; thinly sliced”.

- “Surround with 1½ dozen heavy water by high and drain & cut in ¼ in. remaining the skillet”.

All joking aside, this is serious technology, and while only in its infancy, it has started to make the world a better place for us all.

Moving behind the scenes within the datacentre, we have GPUs, or Graphics Processing Units. Nvidia owns about 90% of this market, with AMD picking up the loose change. GPUs are intrinsic to how AI works because, on a simplistic level, they handle multiple tasks at once using parallel processing. This processing power is hugely important, but so is the network behind it.

What is the recipe of hardware and software that allows this AI powerhouse to bear fruit?

Hardware

The variety of open networking hardware available now is a testament to organisations like the Open Compute Project (OCP) and, I’ll whisper this one, Meta. Facebook, as it was then, started the OCP in 2011 with the goal of disaggregating the hardware from the software. This would allow the hardware OEMs to move at a greater pace, and the software guys to concentrate on what they are good at: software. The hardware vendors were, at that time, all using the Trident and Tomahawk series of ASICs from Broadcom anyway, and so began a standardisation of sorts. The benefit for hyperscalers, like Meta and Google, would be that they could buy their hardware from multiple vendors at a lower price due to increased competition.

On the hardware vendor side, we have Asterfusion, Celestica, Delta, Wistron, Quanta, Micas, Ruijie, and the two market leaders, Edgecore Networks and UfiSpace.

Edgecore Networks

Edgecore were founded in 2010 by the Accton Group, with the intent of leading the open networking revolution. Accton are an OEM/ODM out of Taiwan that make switches and routers for just about everybody. Edgecore have been the gold standard in open networking since its inception and have been contributing their switch designs back into the OCP since 2012. This in turn allows other hardware manufacturers to build switches without a massive R&D overhead. Taking one for the team there, you might say!

Let’s have a look at the new 800G offerings from Edgecore Networks, which have been specifically designed for AI/ML use cases as spine switches, allowing the move to 400G leaf switches.

AIS800-64D

The AIS800-64D / AS9817-64D has 64x QSFP-DD800 switch ports with a Tomahawk 5 ASIC from Broadcom. It is a high-performance, low-latency switch for AI/ML clusters, intended for use as a spine switch, and it enables migration to 400G leaf connectivity within the datacentre.

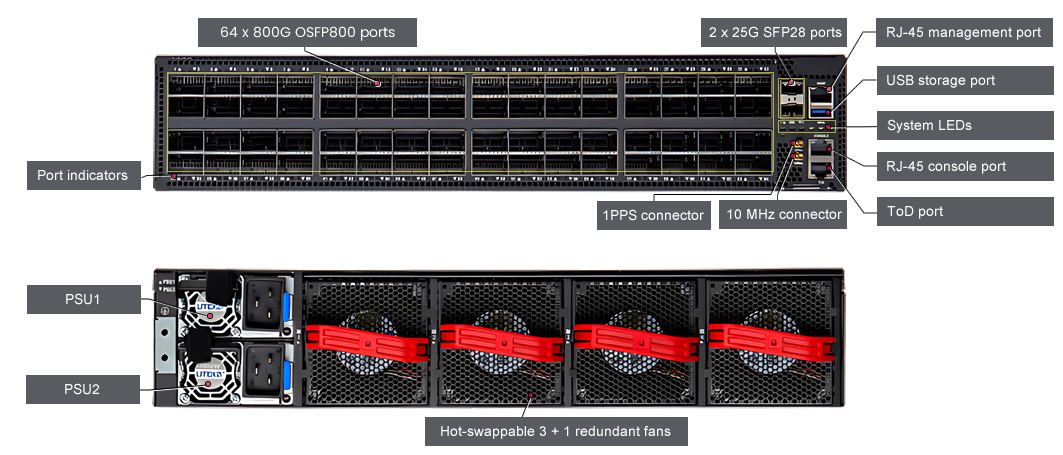

So, we have 64x QSFP-DD800 ports. These can be used at 1x 800G or, via breakout, 2x 400G, 4x 200G, or 8x 100G per port, up to a maximum of 320 ports. That would be a bit of a mess though, right? We have SyncE and PTP support with 1PPS, 10 MHz, and ToD connectors on the front panel. It comes in a 2 RU form factor with two hot-swappable 3000 W PSUs and four hot-swappable fan modules. On the silicon side, we have a Tomahawk 5, a 51.2T capable ASIC with a plethora of new functionality which I can bore you with when you get in touch…
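To make the breakout arithmetic concrete, here is a minimal Python sketch. The port count, breakout modes, and the 320-port ceiling come from the paragraph above; the function name and the idea of applying one breakout mode uniformly to every port are my own simplifications for illustration, not anything from a vendor tool.

```python
# Figures taken from the text: 64 front-panel ports and a 320-port ceiling.
PHYSICAL_PORTS = 64
MAX_LOGICAL_PORTS = 320

# Breakout modes named in the text: ways per port -> speed per logical port (Gb/s).
BREAKOUT_MODES = {1: 800, 2: 400, 4: 200, 8: 100}


def breakout_summary(ways: int) -> dict:
    """Logical port count and aggregate bandwidth if every port used one mode.

    Assumption: the same breakout is applied to all 64 ports, and the
    320-port ceiling simply caps the result.
    """
    speed = BREAKOUT_MODES[ways]
    uncapped = PHYSICAL_PORTS * ways
    logical = min(uncapped, MAX_LOGICAL_PORTS)
    return {
        "mode": f"{ways}x {speed}G",
        "logical_ports": logical,
        "capped": uncapped > MAX_LOGICAL_PORTS,
        "aggregate_tbps": logical * speed / 1000,
    }


if __name__ == "__main__":
    for ways in BREAKOUT_MODES:
        print(breakout_summary(ways))
```

Under those assumptions, the 1x, 2x, and 4x modes all use the full 51.2T, while 8x 100G on every port would ask for 512 logical ports and run into the 320-port ceiling, so you would not break out every port that way.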

On the software side of things, the switch comes pre-loaded with the Open Network Install Environment (ONIE), as all open networking switches do. ONIE is a bootloader that allows us to then install our network operating system (NOS). The only available NOS for this box will be SONiC and possibly OcNOS from IP Infusion, but I will explain that in more detail in the software section.

AIS800-64O

The AIS800-64O / AS9817-64O has 64x OSFP800 ports with a Tomahawk 5 ASIC from Broadcom. Again, for use in high-performance, low-latency AI/ML clusters and use as a spine switch.

As you can see, the box is akin to the previous AS9817-64D model, the main distinction being the OSFP800 ports rather than QSFP-DD. The transceivers are similar, but OSFP800 allows a module power of roughly 12-15 W, against roughly 7-12 W for QSFP-DD. This box can support 1x 800G (100G PAM4) or, via breakout, 2x 400G, 4x 200G, or 8x 100G, up to a maximum of 320 ports. As with the other box, we have all our timing support, hot-swappable PSUs and fans, and a Tomahawk 5 51.2T ASIC from Broadcom. We also have a BMC module for remote monitoring and management of a host system. Software options are limited, but I will explain shortly. Get in touch ASAP to demo this equipment!
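As a rough illustration of what that per-module difference adds up to across a fully populated 64-port box, here is a small Python sketch. The wattage ranges are the approximate ones quoted above; the function name is hypothetical, and real modules vary, so treat the output as a back-of-the-envelope range rather than a datasheet figure.

```python
# Approximate per-module power ranges (watts) as quoted in the text.
MODULE_POWER_W = {
    "QSFP-DD": (7, 12),
    "OSFP800": (12, 15),
}

PORTS = 64  # both Edgecore boxes have 64 front-panel ports


def optics_power_range(form_factor: str, ports: int = PORTS) -> tuple:
    """Return (low, high) total optics power in watts for a fully populated box."""
    low, high = MODULE_POWER_W[form_factor]
    return ports * low, ports * high


if __name__ == "__main__":
    for form_factor in MODULE_POWER_W:
        low, high = optics_power_range(form_factor)
        print(f"{form_factor}: {low}-{high} W across {PORTS} ports")
```

The point is simply that a few watts per module becomes several hundred watts per switch once all 64 cages are filled.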

UfiSpace

For over a decade now, UfiSpace have been providing end-to-end solutions for telcos, service providers, and data centres. They are an OEM/ODM out of Taiwan, like Edgecore. They also play a leading role in Open Networking organisations such as the OCP and TIP, and have very strong engineering and R&D departments. We have been working with UfiSpace for about 5 years now and I can’t speak highly enough of their service.

UfiSpace have 3 models of 800G switch for us to look at. They have two Tomahawk based switches using QSFP-DD and OSFP800 ports, but also a Trident 5 based leaf or ToR option. Here are UfiSpace’s runners and riders in the 800G race.

S9321-64E - QSFP-DD or OSFP

As the name suggests, we have 64x QSFP-DD or 64x OSFP ports with a Tomahawk 5 ASIC from Broadcom. It is for use in hyperscale data services and AI/ML clusters as a spine switch, providing low latency and ultra-high radix to the aforementioned GPUs. We have 4x Ice Lake-D cores from Intel, hot-swappable fans and PSUs, hardware-based encapsulation, enhanced buffering and traffic management, and much more.

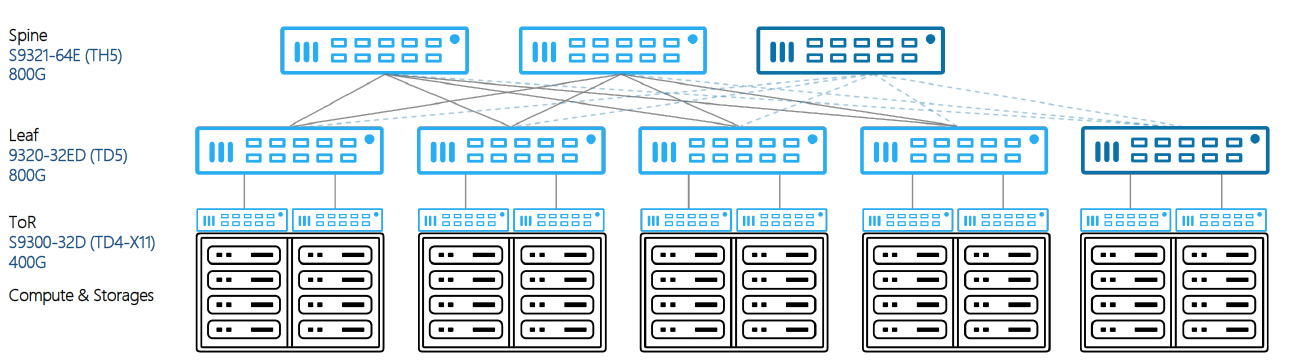

Above we have a sample architecture using the S9321-64E (TH5) as a spine switch, the S9320-32ED (TD5, which we will look at below), and the 400G S9300-32D as our top-of-rack switch to connect into our 400G GPUs. Using spine and leaf, or Clos topology as some call it, provides us with a range of benefits.

- Redundancy – Due to the fabric layout, if a spine switch goes down in the DC, network traffic will re-route itself to another spine switch as each leaf is connected to each spine. This eliminates any single point of failure from our network.

- Scalability – It could not be easier! Just add more leaf switches to accommodate increasing capacity. We tell our customers to keep spare leaf switches and, due to the low pricing of bare metal hardware, this is not Capex heavy.

- Reduced Costs – I have previously mentioned the competition in this sector driving down the price of the hardware for the consumer. There are also no hidden costs when it comes to the software piece of the puzzle: no yearly subscriptions and no extra charges for additional features.

- Minimal Latency – We have additional functionality on the Tomahawk 5 boxes to significantly reduce latency, but the topology itself also reduces latency and makes switch-to-switch latency more predictable. On the software side, RDMA over Converged Ethernet, or RoCE for short, will lower latencies further.
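The redundancy and predictable-latency points above can be sketched in a few lines of Python. This is a toy model, not a network simulator: the switch names are hypothetical, and the only property it relies on is the one stated in the list, that each leaf is connected to each spine.

```python
from itertools import product


def build_fabric(spines, leaves):
    """Full mesh between tiers: every leaf has one uplink to every spine."""
    return set(product(leaves, spines))


def paths(links, src_leaf, dst_leaf):
    """All leaf -> spine -> leaf paths between two different leaves."""
    spines_up = {spine for (leaf, spine) in links if leaf == src_leaf}
    spines_down = {spine for (leaf, spine) in links if leaf == dst_leaf}
    return sorted((src_leaf, spine, dst_leaf) for spine in spines_up & spines_down)


def fail_spine(links, failed):
    """Return the fabric with every link to the failed spine removed."""
    return {(leaf, spine) for (leaf, spine) in links if spine != failed}


if __name__ == "__main__":
    # Hypothetical two-spine, three-leaf fabric.
    fabric = build_fabric(["spine1", "spine2"], ["leaf1", "leaf2", "leaf3"])

    print(paths(fabric, "leaf1", "leaf3"))    # one path per spine
    degraded = fail_spine(fabric, "spine1")
    print(paths(degraded, "leaf1", "leaf3"))  # traffic still has a way through
```

Every path between two leaves is exactly two hops (leaf, spine, leaf), which is where the predictable latency comes from, and losing a spine removes one of the equal paths rather than cutting any leaf off.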

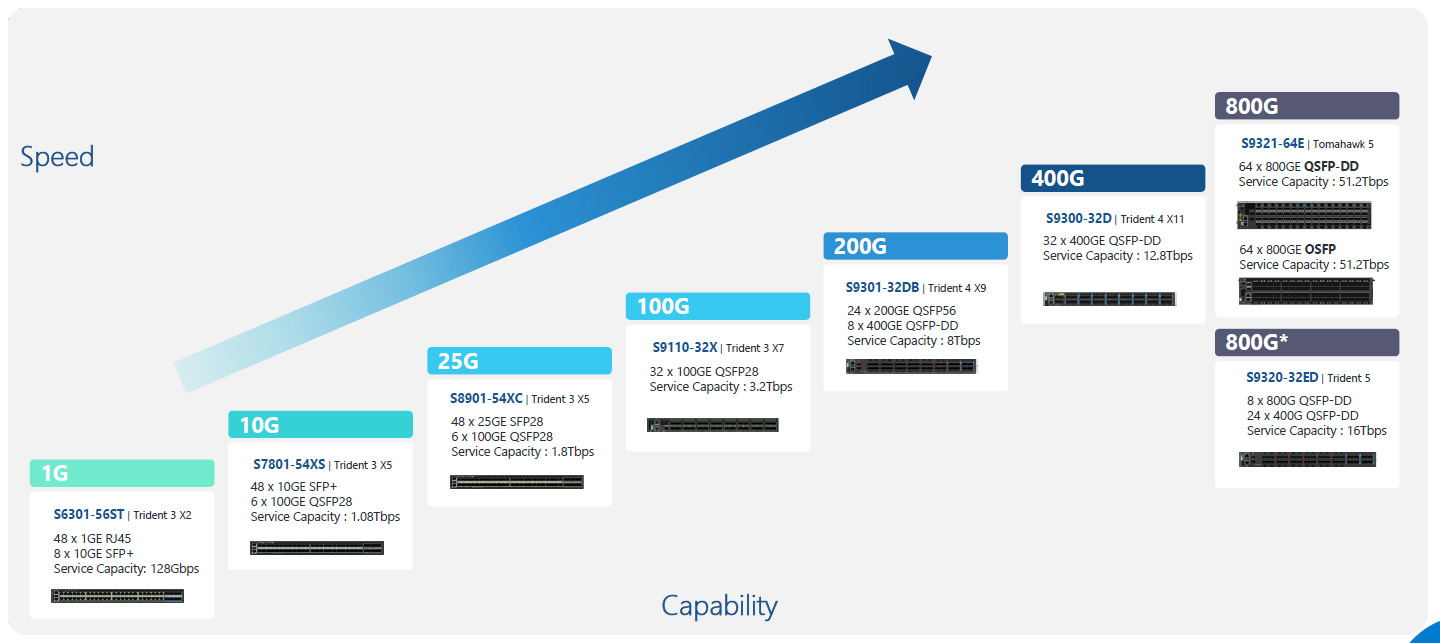

Ufispace have a full portfolio of switches from 1G to 800G, so there is no need to feel left out if you don’t require these incredible speeds. Below is a quick guide to the product set available with ports, speeds, and capacity. If you require any information about any of the switches, please get in touch.

That just about covers the 800G hardware that is available within Open Networking. To summarise, we have multiple models using the OSFP form factor, multiple using QSFP-DD, and a Trident 5 based model for hyperscale leaf use cases. As I always say, these switches are very expensive paperweights without the software, so please stay tuned for part 2 on all things software within the industry.

As usual, I would be more than happy to share additional resources with you. For more technical information on products or SDN, drop me a mail, and you can also browse our Open Networking products.

Slán go fóill,

Barry